Connections Builder

The Connections Builder is designed to be your gateway to the outside world, as well as the place where you can design complex data interactions. It's the Builder that makes it easy to use and modify data, both internal and external.

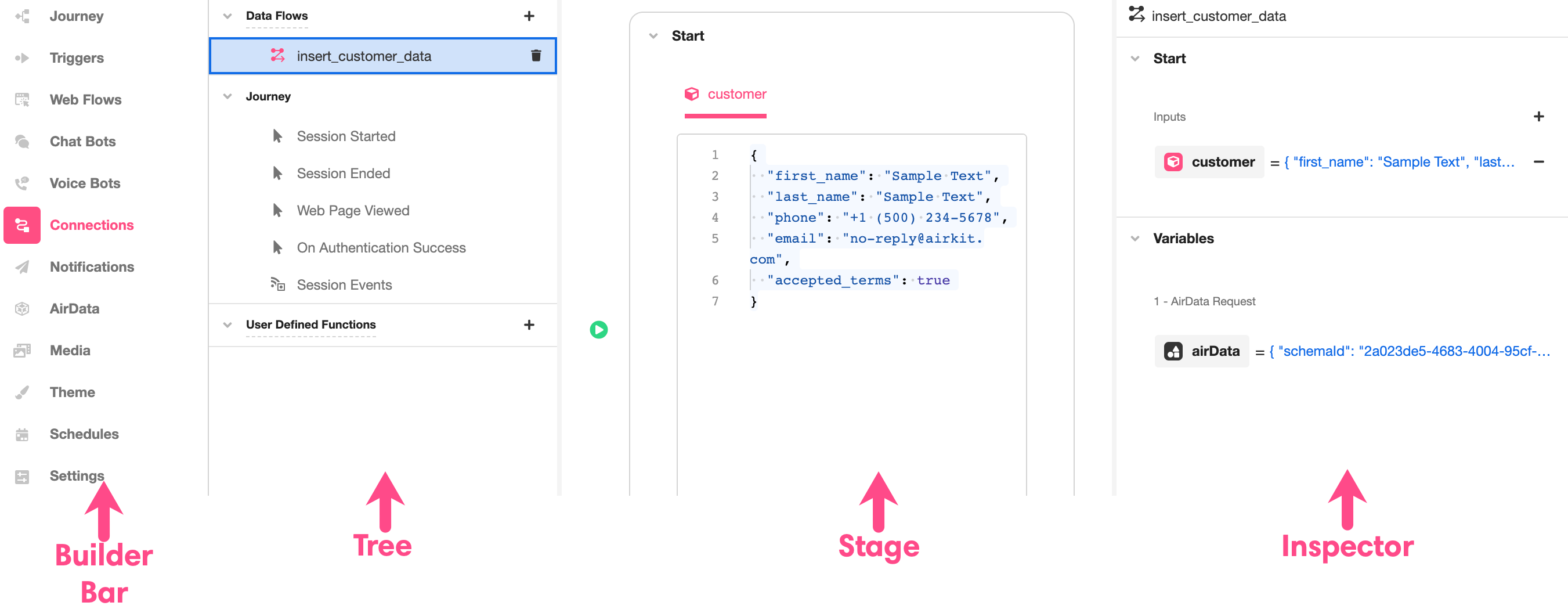

Like most Builders, the layout of the Connections Builder maps comfortably onto the general structure of the Studio: to the immediate right of the Builder Bar is the Tree, to the right of which is the Stage, to the right of which might be the Inspector:

Structure

Tree

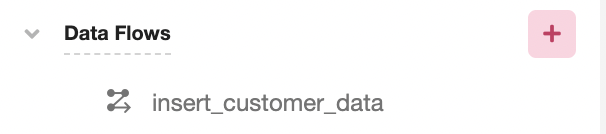

The Tree is where you'll find an expandable and collapsible breakdown of the connections you have built out as part of your app. Airkit automatically splits these connections up into three types: Data Flows, Journey, and User Defined Functions. If you want to create a new connection, click the '+' sign to the right of the type of connection you want to add.

If you want to delete a connection after it's been created, click on the trash icon that appears to the immediate right of the undesired connection when you run your cursor over it.

Stage

The Stage displays a visual representation of the connection you are accessing. Exactly what is displayed in the Stage varies depending on the type of connection you are building or editing. In the case of editing an Event under Journey, it will be left blank.

Inspector

The Inspector is where you can examine and modify details associated with the element being examined.

Connection Types

Data Flows

Data Flows are custom connections. Conceptually, Data Flows work like functions: they take in input, process it, and return the resulting output. Once a Data Flow has been built, it can be initiated as an Action within your application.

Data Flows are made up of component parts called Data Operations, which define how input is processed. Processing input might entail anything from making HTTP requests to sending emails to running another Data Flow. A single Data Flow might consist of any number of interconnected Data Operations, though these Data Operations do not appear nested underneath them in the Tree; building Data Flows out of Data Operations is done in the Stage.

Stage

When working with Data Flows, the Stage is where you'll see and build out the individual connections within. Upon creating a new, custom Data Flow, the Stage appears as follows. The top box, Start, keeps track of the input the Data Flow requires and the bottom box, End, is where you'll declare which of the internal variables the Data Flow will return. Note that, as this newly-created Data Flow is blank, no input is defined, and there are no return values to choose from. Also note that there is no way to add input values within the Stage; keeping track of the variables a Data Flow has access to – including the direct input – is the domain of the Inspector:

The individual Data Operations that the Data Flow consists of go between the Start box and the End box. You can add a new Data Operation between connections by clicking on the '+' icon between them directly in the middle of the stage. Individual Data Operations can be deleted by clicking on the trash icon to their immediate right:


Once a Data Operation has been added, its details can be edited directly within the Stage. There are many different types of Data Operations, and how each one appears and can be edited will differ according to functionality. For a closer look into the different types of Data Operations, check out the reference documentation.

In the process of creating and editing Data Operations within a Data Flow, it is a good idea to test how they run to ensure they work as intended. This can be done by clicking on the 'Run' button within a Data Operation. Often, however, a Data Operation requires access to other variables – most commonly, direct input – in order to run. To streamline the process of testing, dummy input can be defined directly in the Stage. Once the required input has been defined in the Inspector, example input of the correct data type is automatically generated. This dummy input can be both examined and edited in the Start box, and when individual Data Operations are run, they will use the dummy values in place of the input variables:

When Data Operations are built out, relevant variables will be auto-generated to account for their output, which can be used as input in downstream Data Operations. The more complex a Data Flow, the more of these variables will be generated, making it important to designate precisely which one is the desired output of the Data Flow as a whole. Once a Data Flow has been fully built out, you can specify which internal variable it will return by selecting the desired output from a dropdown menu in the End box.
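As a rough conceptual analogy only (this is Python, not Airkit's implementation or Airscript), the relationship between Data Operations, their auto-generated variables, and the End box can be sketched as below. The names `run_data_flow`, `doubled`, and `described` are hypothetical and chosen purely for illustration:

```python
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical model: an operation is a (variable_name, function) pair.
# The function receives every variable defined so far.
Operation = Tuple[str, Callable[[Dict[str, Any]], Any]]


def run_data_flow(
    inputs: Dict[str, Any],
    operations: List[Operation],
    return_variable: str,
) -> Any:
    """Run each operation in order, then return the selected variable."""
    variables = dict(inputs)  # plays the role of the Start box
    for name, func in operations:
        # Each operation's output is stored under its own variable name,
        # so downstream operations can use it as input.
        variables[name] = func(variables)
    # Plays the role of the End box: exactly one variable is returned.
    return variables[return_variable]


operations: List[Operation] = [
    ("doubled", lambda v: v["input_number"] * 2),
    ("described", lambda v: f"result is {v['doubled']}"),
]

result = run_data_flow({"input_number": 1}, operations, "described")
print(result)  # result is 2
```

The more operations a flow contains, the more intermediate variables accumulate, which is why the variable to return has to be chosen explicitly.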


For more on how internal variables are generated and how dummy values are stored for the sake of testing, see the Inspector section.

Inspector

When examining Data Flows, the Inspector displays the relevant internal variables. These are separated into two expandable and collapsible sections: Start, and Variables.

Start

The Start section in the Inspector is where you can define the input that the Data Flow requires. This is done by clicking on the '+' icon to the right of 'Inputs' and selecting the desired data type of the additional input. Upon creation, you will be directed to name the input variable, after which a dummy value of the correct data type will be assigned to the variable for testing purposes. This dummy value is not accessible outside of the Connections Builder, and it does not influence the behavior of the Data Flow when used as part of your app. It is only used when a value for that variable is required in order to test Data Operations within the Data Flow in the Stage.

The following example shows how to add a number input, named input_number, to a Data Flow. Note that input_number is automatically set to the value '1' for testing purposes. This is not because the number one has any particular significance in the context of this Data Flow; it is only because 1 is an arbitrary Number, and thus makes a passable dummy value.
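The distinction between dummy values and real input can be illustrated with a small Python sketch. This is a conceptual model, not how Airkit works internally: the `FlowInput` class, the `resolve_inputs` function, and the choice to raise an error when live input is missing are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass
class FlowInput:
    """Hypothetical declared input: a name plus a test-only dummy value."""
    name: str
    dummy: Any


def resolve_inputs(
    declared: List[FlowInput],
    supplied: Dict[str, Any],
    testing: bool,
) -> Dict[str, Any]:
    """Pick dummy values for test runs and supplied values for live runs."""
    resolved: Dict[str, Any] = {}
    for item in declared:
        if testing:
            # Test runs in the builder use the dummy value.
            resolved[item.name] = item.dummy
        elif item.name in supplied:
            # Live runs use only what the caller actually passed in.
            resolved[item.name] = supplied[item.name]
        else:
            # Assumption of this sketch: the dummy never leaks into live runs.
            raise KeyError(f"missing input: {item.name}")
    return resolved


declared = [FlowInput(name="input_number", dummy=1)]
test_values = resolve_inputs(declared, {}, testing=True)
live_values = resolve_inputs(declared, {"input_number": 42}, testing=False)
print(test_values, live_values)
```

The point of the sketch is that the dummy value exists only on the testing path; nothing in the live path ever reads it.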


Once created, dummy values can be changed in the Start box in the Stage.

Variables

The Variables section keeps track of all internal variables that are not required as input. (Remember: Data Flows might consist of any number of Data Operations, and Data Operations often produce their own output, which can be used as input for Data Operations downstream.) All variables automatically generated by Data Operations are found under Variables.

The following example shows what appears in the Inspector after a Transform Data Operation has been added but before it has been run. Note that the automatically-generated variable, called transform, is present but undefined:

Once the Transform Data Operation has been defined – in this example, so that it outputs the sine of input_number – and run, the value of transform will change to reflect that:

When downstream Data Operations call upon the transform variable, they will, when run for testing purposes, use the value 0.8414709848078965 – at least until that value changes as a result of the Transform Data Operation being modified and run again to yield a different result.
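The value quoted above can be checked outside of Airkit. The following Python snippet is only an arithmetic check, not Airscript; it assumes the sine is taken in radians, which is consistent with the value shown for an input of 1:

```python
import math

input_number = 1  # the dummy value assigned to the Number input

# Sine of 1 radian, mirroring what the Transform Data Operation outputs.
transform = math.sin(input_number)

print(transform)  # 0.8414709848078965
```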

Journey

This branch of the Tree contains Events that pertain to the overall flow of a Journey, including Starting Events and Session Events. For more on what Events are and their significance within Airkit, see Events.

Out of the box, four Events come nested under Global:

- Session Started - triggered when the Journey first begins

  - Note that this Event is the only one that comes with an associated Action Chain pre-built. This automates the process of initializing the Actor when Journeys begin with an incoming call or text. For more on Actor initialization, see Conversations with Actors.

- Session Ended - triggered when the Journey ends

- Web Page Viewed - triggered when any Web Page is viewed

- On Authentication Success - triggered when an app is successfully authenticated

Also nested under Journey is the Session Events branch. Custom Session Events nest under this branch upon creation. To make a new Session Event, click on the '+' icon to the right of Session Events in the Tree.

When inspecting any Event under Journey, the Stage will remain blank. There will, however, be a populated Inspector, in which the Actions Builder can be used to assign the Event an Action Chain.

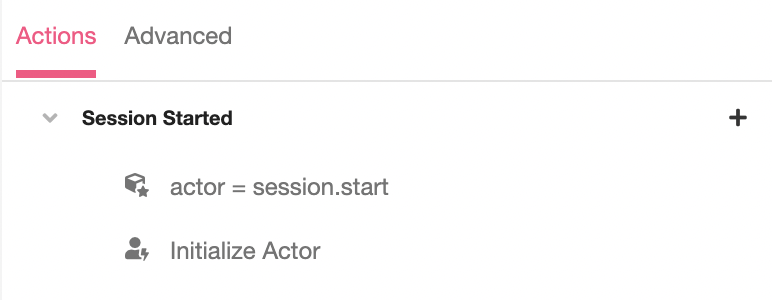

Inspector

When examining an Event under Journey, the Inspector is where you can construct the Action Chain that will be triggered by the Event. This is done under the Actions tab. When examining Session Events, the Inspector is also where you can define variables that can be given to the Event as input. This is done under the General tab when applicable.

Note that when examining the Event Session Started – the Starting Event – out of the box, it comes pre-populated with an Action Chain, which automates the process of initializing the Actor when Journeys begin with an incoming call or text:

For more on Actor initialization, see Conversations with Actors.

User Defined Functions

User Defined Functions are custom functions made out of and parsable by Airscript. They can be used as part of an application in the same way as out-of-the-box Airscript functions can. For more details on how to build your own User Defined Function, see Creating Custom Functions.

Stage

When working with User Defined Functions, the Stage displays the Function Body, which dictates the behavior of the User Defined Function in terms of Airscript and the designated input.

Inspector

When inspecting a User Defined Function, you'll find the means to edit the details of the function name as well as the input it expects and the output it will return.
